Is it possible to predict whether someone will commit a crime some time in the future?

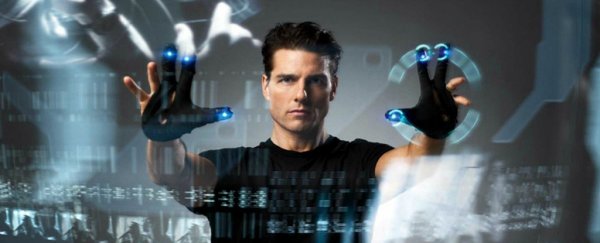

It sounds like an idea from the 2002 science-fiction movie Minority Report, but that's what statistical researcher Richard Berk, from the University of Pennsylvania, hopes to find out from work he's carried out this year in Norway.

The Norwegian government collects massive amounts of data about its citizens and links each person's records to a single identification file. Berk hopes to crunch the data from the files of children and their parents to see whether he can predict, from the circumstances of a child's birth, whether that child will commit a crime before turning 18.

The problem here is that newborn babies haven't done anything yet. One possible outcome of Berk's experiment would be to pre-classify some children as 'likely criminals' on the basis of nothing more than the circumstances of their birth.

This could be the first step in making Minority Report a reality, where people could be condemned for crimes they haven't even committed.

The art of prediction

Berk's work is based on machine learning. In machine learning, data scientists design algorithms that teach computers to identify patterns in large data sets. Once the computer has learned those patterns, it can apply them to predict outcomes, even for data it has never seen before.

For example, the US retail giant Target collected data about the shopping habits of its customers and used machine learning to predict what customers were likely to buy and when.

But it got into hot water in 2012 when its pregnancy-prediction model correctly predicted that a high school student in Minnesota was pregnant.
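The learn-from-examples, then-predict process described above can be sketched in a few lines of Python. This is a deliberately tiny toy, far simpler than anything Target or Berk would use: it learns a single cut-off on one invented numeric feature (a so-called decision stump) from labelled past cases, then applies that cut-off to values it has never seen. The function names and data are hypothetical, chosen only for illustration.

```python
def fit_threshold(xs: list[float], ys: list[int]) -> float:
    """Learn a cut-off t from labelled examples: predict 1 when x >= t, else 0.

    Tries each observed value as a candidate cut-off and keeps the one that
    classifies the most training examples correctly (a one-feature decision stump).
    """
    best_t, best_correct = xs[0], -1
    for t in sorted(set(xs)):
        correct = sum(int(x >= t) == y for x, y in zip(xs, ys))
        if correct > best_correct:
            best_t, best_correct = t, correct
    return best_t


def predict(t: float, xs: list[float]) -> list[int]:
    """Apply the learned cut-off to new, unseen values."""
    return [1 if x >= t else 0 for x in xs]


# Invented training data: one numeric feature per case and a 0/1 outcome.
train_x = [1, 2, 3, 4, 6, 7, 8, 9]
train_y = [0, 0, 0, 0, 1, 1, 1, 1]

t = fit_threshold(train_x, train_y)
print(t)                    # 6
print(predict(t, [5, 10]))  # [0, 1]
```

Real systems combine many features and far more data, but the two steps are the same: fit a rule to past cases, then apply it to new ones.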

Given machine learning's potential to help prevent crime, it is hardly surprising that criminology has turned to it in an attempt to predict human behaviour. It has already been used, for example, to predict whether an offender is likely to reoffend (that is, to be a recidivist).

Predicting criminal behaviour

Using machine learning to inform risk assessments in the criminal justice system has long been a focus of Berk's work.

For example, earlier this year he looked at whether a person on bail for alleged domestic violence offences was likely to commit another offence before their next court date.

Whether machine-learning algorithms can accurately predict human behaviour depends on having as much contextual data as possible. Target used metadata from customers' shopping routines. Berk, on the other hand, uses predictors specific to crime and demographics.

These include a person's number of prior arrests, age at first arrest, the type of crime or crimes committed and the number of prior convictions. They also include prison work performance, proximity to a high-crime neighbourhood, IQ and gender.

In some of his studies Berk has used as many as 36 predictors.

Low-risk and high-risk individuals

In each of Berk's experiments, the algorithm was able to predict quite accurately who would be a low-risk individual.

For example, he identified 89 percent of those people unlikely to commit domestic violence, 97 percent of inmates unlikely to commit serious misconduct in prison and 99 percent of past offenders unlikely to commit a homicide offence.

The trouble, though, is that the algorithm was nowhere near as accurate in predicting who would be a high-risk individual. It achieved only:

- 9 percent accuracy in predicting which inmates would engage in serious misconduct

- 7 percent accuracy in predicting which offenders on parole or under community supervision would commit a homicide offence

- 31 percent accuracy in predicting which defendants on bail for domestic violence offences would commit another offence before their next court date.
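The article does not say exactly how each of these percentages was calculated. The sketch below assumes that "accuracy" for a group means the share of forecasts for that group that turned out to be correct, and it uses invented counts, not Berk's data, to show how a single model can look very accurate about low-risk people and very inaccurate about high-risk people when the behaviour being predicted is rare. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ForecastCounts:
    """Outcome counts for one set of forecasts (all numbers below are invented)."""
    true_high: int   # forecast high-risk, and did offend
    false_high: int  # forecast high-risk, but did not offend
    true_low: int    # forecast low-risk, and did not offend
    false_low: int   # forecast low-risk, but did offend


def low_risk_accuracy(c: ForecastCounts) -> float:
    """Share of 'low-risk' forecasts that turned out to be correct."""
    return c.true_low / (c.true_low + c.false_low)


def high_risk_accuracy(c: ForecastCounts) -> float:
    """Share of 'high-risk' forecasts that turned out to be correct."""
    return c.true_high / (c.true_high + c.false_high)


def base_rate(c: ForecastCounts) -> float:
    """Share of the whole group who actually offended."""
    total = c.true_high + c.false_high + c.true_low + c.false_low
    return (c.true_high + c.false_low) / total


# Hypothetical group of 1,000 people, 30 of whom go on to offend.
# The model flags 100 people as high-risk; only 9 of them actually offend.
counts = ForecastCounts(true_high=9, false_high=91, true_low=879, false_low=21)

print(f"base rate: {base_rate(counts):.1%}")
print(f"low-risk accuracy: {low_risk_accuracy(counts):.1%}")
print(f"high-risk accuracy: {high_risk_accuracy(counts):.1%}")
```

With these made-up numbers the script prints a base rate of 3.0%, a low-risk accuracy of 97.7% and a high-risk accuracy of 9.0%. Because so few people in the group offend at all, most "low-risk" forecasts are right almost by default, while picking out the few who will offend is much harder.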

There are two ways of using the results of Berk's experiments. First, we could divert resources away from low-risk individuals. This might involve placing less onerous supervision conditions on domestic violence defendants who are at low risk of committing another offence.

Alternatively, we could target more resources towards high-risk individuals. This might involve placing inmates at high risk of serious misconduct into higher-security prisons.

But there are two clear problems with using the data to target high-risk individuals. First, there has been relatively little success in predicting who actually does pose a risk (compared with predicting who does not), a limitation that Berk himself concedes.

Second, our criminal justice system is premised on the idea that people have free will and might still make the right choice to not commit a crime, even if they only do so at the last possible moment.

How is all this different to what the Australian criminal justice system does when it makes predictions about high-risk individuals?

Much of the justice system's work already involves making educated guesses about whether someone is an unacceptable threat to public safety or poses a high risk of future danger.

These assessments contribute to officials' decisions about whether to grant bail, whether to grant parole and how harshly a person should be sentenced.

Prime Minister Malcolm Turnbull recently asked states and territories to push for new legislation to allow 'high risk' offenders convicted of terrorism offences to be held in detention even after their sentences have been served.

Generally, though, such decisions are based primarily on the past behaviour of the particular individual, not on data about the past behaviour of other people.

Using predictive behavioural tools to decide whether (or for how long) someone should be in prison, based on something that has not yet even happened, would represent a substantial philosophical shift.

We would no longer consider people to be innocent until proven guilty, but would instead see them as guilty by reason of destiny.

Paul McGorrery, PhD Candidate (Criminal Law), Deakin University and Dawn Gilmore, PhD Candidate in Sociology and Learning, Swinburne University of Technology.

This article was originally published by The Conversation.
