As we speak, our brains choreograph an intricate dance of muscles in our mouths and throats to form the sounds that make up words. This complex performance is reflected in the electrical signals sent to speech muscles.

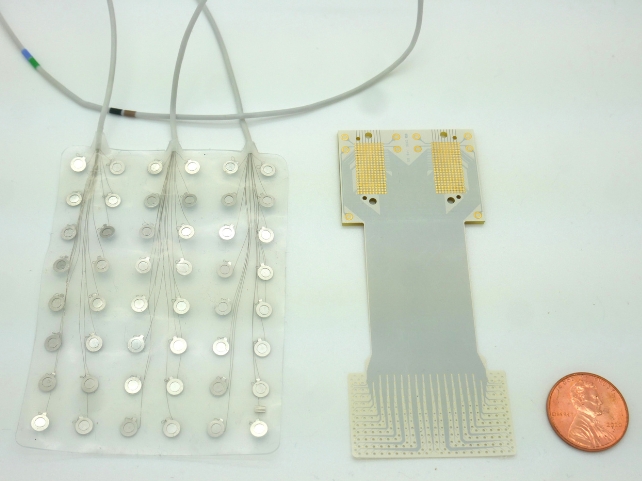

In a new breakthrough, scientists have crammed a huge array of tiny sensors into a space no larger than a postage stamp to read this complex mix of electrical signals and predict the sounds a person is trying to make.

The 'speech prosthetic' opens the door to a future where people unable to speak due to neurological conditions can communicate through thought.

Your initial reaction might be to assume it reads minds. More accurately, the sensors detect which muscles we want to move in our lips, tongue, jaw, and larynx.

"There are many patients who suffer from debilitating motor disorders, like ALS (amyotrophic lateral sclerosis) or locked-in syndrome, that can impair their ability to speak," says co-senior author, neuroscientist Gregory Cogan from Duke University.

"But the current tools available to allow them to communicate are generally very slow and cumbersome."

Similar recent technology decodes speech at about half the average speaking rate. The team thinks their technology should reduce that delay, as it fits more electrodes on a tiny array to record more precise signals, though work needs to be done before the speech prosthetic can be made available to the public.

"We're at the point where it's still much slower than natural speech, but you can see the trajectory where you might be able to get there," co-senior author and Duke University biomedical engineer Jonathan Viventi said in September.

The researchers constructed their electrode array on medical-grade, ultrathin flexible plastic, with electrodes spaced less than two millimeters apart that can detect specific signals even from neurons extremely close together.

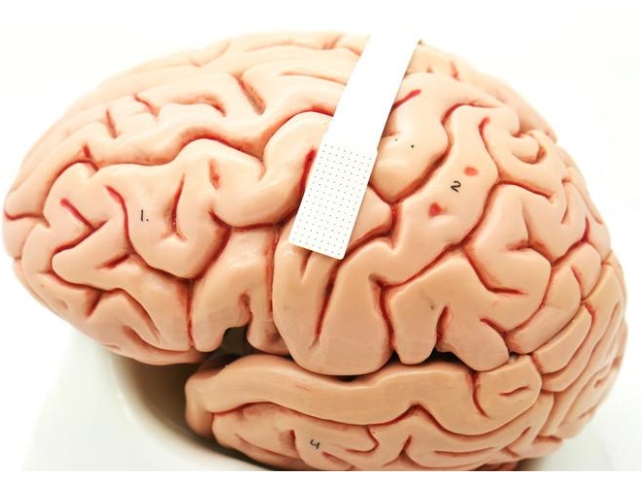

To test how useful these micro-scale brain recordings are for speech decoding, they temporarily implanted their device in four patients without speech impairment.

The researchers seized the opportunity while the patients were having surgery (three of them for movement disorders and one to remove a tumor), which meant they had to make it quick.

"I like to compare it to a NASCAR pit crew," Cogan says. "We don't want to add any extra time to the operating procedure, so we had to be in and out within 15 minutes.

"As soon as the surgeon and the medical team said 'Go!' we rushed into action and the patient performed the task."

While the tiny array was implanted, the team was able to record activity in the brain's speech motor cortex that signals to speech muscles while patients repeated 52 meaningless words. The 'non-words' included nine different phonemes, the smallest units of sound that create spoken words.

The recordings showed phonemes elicited different patterns of signal firing, and they noted these firing patterns occasionally overlapped one another, kind of like the way that musicians in an orchestra blend their notes. This suggests our brains dynamically adjust our speech in real time as the sounds are being made.

Duke University biomedical engineer Suseendrakumar Duraivel used a machine learning algorithm to evaluate the recorded information to determine how well brain activity could predict future speech.

Some sounds were predicted with 84 percent accuracy, particularly if the sound came at the start of a non-word, like the 'g' in 'gak'. Accuracy varied and dropped in more complicated situations, like for phonemes in the middle and at the end of non-words, and overall the decoder had an average accuracy rate of 40 percent.

This is based on just a 90-second sample of data from each participant, which is impressive considering existing technology needs hours of data to decode speech.
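For a rough sense of what a decoding accuracy figure means, here is a minimal sketch in Python. It is not the study's algorithm or data: it generates synthetic 'recordings' for nine made-up phoneme classes, trains a simple nearest-centroid classifier (a stand-in technique chosen for brevity), and compares its accuracy with chance. All function names, the number of features, and the noise level are assumptions for illustration. The one figure taken from the article is the count of nine phonemes, which puts pure guessing at about 11 percent if every phoneme is assumed equally likely.

```python
import random

def make_trials(n_phonemes, n_features, trials_per_phoneme, noise, rng):
    """Synthetic data: each phoneme has a random template; a trial is template + noise."""
    templates = [[rng.gauss(0, 1) for _ in range(n_features)]
                 for _ in range(n_phonemes)]
    trials = []
    for label, template in enumerate(templates):
        for _ in range(trials_per_phoneme):
            features = [value + rng.gauss(0, noise) for value in template]
            trials.append((features, label))
    return trials

def fit_centroids(train):
    """Average the feature vectors of each phoneme class."""
    sums, counts = {}, {}
    for features, label in train:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def predict(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def sqdist(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: sqdist(centroids[label]))

def accuracy(centroids, test):
    """Fraction of held-out trials whose predicted label matches the true one."""
    hits = sum(predict(centroids, features) == label for features, label in test)
    return hits / len(test)

# Hypothetical settings: 9 phonemes (from the article), everything else assumed.
rng = random.Random(0)
trials = make_trials(n_phonemes=9, n_features=16, trials_per_phoneme=20,
                     noise=1.0, rng=rng)
rng.shuffle(trials)
split = int(0.75 * len(trials))          # 75% for training, 25% held out
train, test = trials[:split], trials[split:]

centroids = fit_centroids(train)
acc = accuracy(centroids, test)
chance = 1 / 9                            # guessing among nine equally likely phonemes

print(f"decoder accuracy: {acc:.0%}, chance level: {chance:.0%}")
```

The synthetic classes here are far cleaner than real brain recordings, so the accuracy this toy prints says nothing about the device itself. The useful part is the comparison: any accuracy figure for a nine-way choice is best read against that roughly 11 percent baseline.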

As a result of this promising start, a substantial grant from the National Institutes of Health has been awarded to support further research and fine-tuning of the technology.

"We're now developing the same kind of recording devices, but without any wires," says Cogan. "You'd be able to move around, and you wouldn't have to be tied to an electrical outlet, which is really exciting."

The study has been published in Nature Communications.