Human societies are so prosperous mostly because of how altruistic we are. Unlike other animals, people cooperate even with complete strangers.

We share knowledge on Wikipedia, we show up to vote, and we work together to responsibly manage natural resources.

But where do these cooperative skills come from and why don't our selfish instincts overwhelm them?

Using a branch of mathematics called evolutionary game theory to explore this feature of human societies, my collaborators and I found that empathy – a uniquely human capacity to take another person's perspective – might be responsible for sustaining such extraordinarily high levels of cooperation in modern societies.

Social rules of cooperation

For decades scholars have thought that social norms and reputation can explain much altruistic behavior. Humans are far more likely to be kind to individuals they see as "good" than to people with a "bad" reputation.

If everyone agrees that being altruistic toward other cooperators earns you a good reputation, cooperation will persist.

This universal understanding of whom we see as morally good and worthy of cooperation is a form of social norm – an invisible rule that guides social behavior and promotes cooperation.

A common norm in human societies called "stern judging", for instance, rewards cooperators who refuse to help bad people, but many other norms are possible.
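To make the idea of a norm concrete, here is a minimal Python sketch that writes stern judging as a lookup table. It assumes the formulation commonly used in the indirect reciprocity literature, since the article itself does not spell out all four cases. The names `STERN_JUDGING` and `judge` are illustrative and do not come from the study.

```python
# Sketch of a social norm as a lookup table (illustrative names only).
GOOD, BAD = "good", "bad"
COOPERATE, DEFECT = "cooperate", "defect"

# Assumed formulation of stern judging: the reputation a donor earns
# depends on what the donor did and on the recipient's reputation.
STERN_JUDGING = {
    (COOPERATE, GOOD): GOOD,  # helping a good person is good
    (DEFECT, GOOD): BAD,      # refusing to help a good person is bad
    (COOPERATE, BAD): BAD,    # helping a bad person is bad
    (DEFECT, BAD): GOOD,      # refusing to help a bad person is good
}


def judge(norm, action, recipient_reputation):
    """Return the reputation the donor earns under the given norm."""
    return norm[(action, recipient_reputation)]


# Refusing to help someone with a bad reputation earns a good reputation.
verdict = judge(STERN_JUDGING, DEFECT, BAD)
```

Other norms could be sketched by changing the four entries of the table, which is one way to see why "many other norms are possible".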

This idea that you help one person and someone else helps you is called the theory of indirect reciprocity. However, the theory has been built on the assumption that people always agree on each other's reputations as they change over time. Moral reputations were presumed to be fully objective and publicly known.

Imagine, for instance, an all-seeing institution monitoring people's behavior and assigning reputations, like China's social credit system, in which people will be rewarded or sanctioned based on "social scores" calculated by the government.

But in most real-life communities, people often disagree about each other's reputations. A person who appears good to me might seem like a bad individual from my friend's perspective.

My friend's judgment might be based on a different social norm or a different observation than mine. This is why reputations in real societies are relative – people have different opinions about what is good or bad.

Using biology-inspired evolutionary models, I set out to investigate what happens in a more realistic setting. Can cooperation evolve when there are disagreements about what is considered good or bad?

To answer this question, I first worked with mathematical descriptions of large societies, in which people could choose between various types of cooperative and selfish behaviors based on how beneficial they were.

Later I used computer models to simulate social interactions in much smaller societies that more closely resemble human communities.

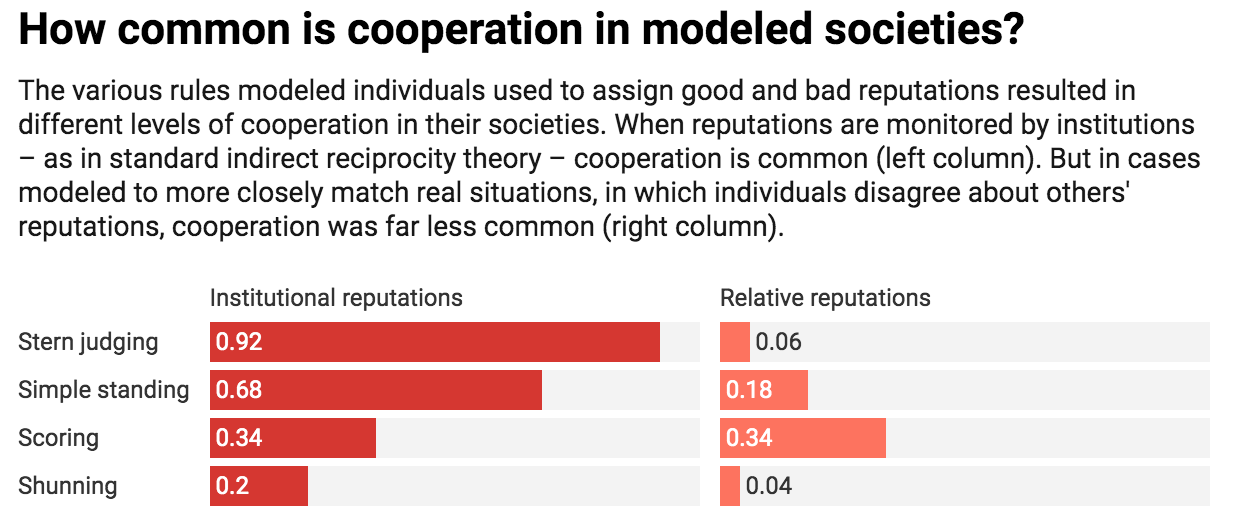

The results of my modeling work were not encouraging: Overall, moral relativity made societies less altruistic. Cooperation almost vanished under most social norms. This meant that most of what was known about social norms promoting human cooperation may have been false.

(Radzvilavicius et al, eLife, 2019)

Evolution of empathy

To find out what was missing from the dominant theory of altruism, I teamed up with Joshua Plotkin, a theoretical biologist at the University of Pennsylvania, and Alex Stewart at the University of Houston, both experts in game-theoretical approaches to human behavior.

We agreed that my pessimistic findings went against our intuition – most people do care about reputations and about the moral value of others' actions.

But we also knew that humans have a remarkable ability to empathetically include other people's views when deciding that a certain behavior is morally good or bad.

On some occasions, for instance, you might be tempted to judge an uncooperative person harshly when you really shouldn't, because from their own perspective cooperation was not the right thing to do.

This is when my colleagues and I decided to modify our models to give individuals the capacity for empathy – that is, the ability to make their moral evaluations from the perspective of another person.
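One way to picture this kind of perspective-taking is the rough Python sketch below. It assumes that an observer adopts the donor's view of the recipient with some probability, here called `empathy`, and otherwise relies on their own view. The parameter, the function name and the stern judging table are illustrative assumptions and are not taken from the published model.

```python
import random

GOOD, BAD = "good", "bad"
COOPERATE, DEFECT = "cooperate", "defect"

# Assumed stern judging table: (action, recipient reputation) -> verdict.
STERN_JUDGING = {
    (COOPERATE, GOOD): GOOD,
    (DEFECT, GOOD): BAD,
    (COOPERATE, BAD): BAD,
    (DEFECT, BAD): GOOD,
}


def empathetic_judgment(action, observer_view, donor_view, empathy, rng):
    """Judge a donor's action toward a recipient.

    observer_view: recipient's reputation in the observer's eyes
    donor_view:    recipient's reputation in the donor's eyes
    empathy:       hypothetical probability of adopting the donor's view
    rng:           a random.Random instance
    """
    if rng.random() < empathy:
        recipient_reputation = donor_view      # take the donor's perspective
    else:
        recipient_reputation = observer_view   # judge from one's own perspective
    return STERN_JUDGING[(action, recipient_reputation)]


rng = random.Random(0)
# The donor refused to help someone the donor sees as bad,
# but the observer sees that same recipient as good.
selfish_verdict = empathetic_judgment(DEFECT, GOOD, BAD, empathy=0.0, rng=rng)
empathetic_verdict = empathetic_judgment(DEFECT, GOOD, BAD, empathy=1.0, rng=rng)
```

In this sketch a fully self-centered observer condemns the donor, while a fully empathetic observer recognizes that, from the donor's point of view, refusing to help was the right thing to do.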

We also wanted individuals in our model to be able to learn how to be empathetic, simply by observing and copying personality traits of more successful people.
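Copying more successful people is often modeled in evolutionary game theory with a pairwise comparison rule. The Python sketch below uses one common choice, a logistic (Fermi) function of the payoff difference. Whether the study used exactly this rule is an assumption here, and the name `imitation_probability` and the `selection_strength` parameter are hypothetical.

```python
import math


def imitation_probability(own_payoff, role_model_payoff, selection_strength=1.0):
    """Probability of copying a role model's trait (assumed Fermi rule).

    The more the role model out-earns the learner, the closer the
    probability gets to 1; equal payoffs give a 50/50 chance.
    """
    payoff_gap = role_model_payoff - own_payoff
    return 1.0 / (1.0 + math.exp(-selection_strength * payoff_gap))


# A learner compares payoffs with a more successful individual.
p_copy = imitation_probability(own_payoff=1.0, role_model_payoff=2.0)
```

Under a rule like this, a trait such as empathy can spread through a population if the individuals who carry it tend to earn higher payoffs than those who do not.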

When we incorporated this type of empathetic perspective-taking into our equations, cooperation rates skyrocketed; once again we observed altruism winning over selfish behavior.

Even initially uncooperative societies, in which everyone judged each other based mostly on their own selfish perspectives, eventually discovered empathy – it became socially contagious and spread throughout the population. Empathy made our model societies altruistic again.

Moral psychologists have long suggested that empathy can act as social glue, increasing cohesiveness and cooperation of human societies.

Empathetic perspective-taking starts developing in infancy, and at least some aspects of empathy are learned from parents and other members of the child's social network.

But how humans evolved empathy in the first place remained a mystery.

It is incredibly difficult to build rigorous theories about concepts of moral psychology as complex as empathy or trust.

Our study offers a new way of thinking about empathy, by incorporating it into the well-studied framework of evolutionary game theory. Other moral emotions like guilt and shame can potentially be studied in the same way.

I hope that the link between empathy and human cooperation we discovered can soon be tested experimentally. Perspective-taking skills are most important in communities where many different backgrounds, cultures and norms intersect; this is where different individuals will have diverging views on what actions are morally good or bad.

If the effect of empathy is as strong as our theory suggests, there could be ways to use our findings to promote large-scale cooperation in the long term – for instance, by designing nudges, interventions and policies that promote development of perspective-taking skills or at least encourage considering the views of those who are different.

Arunas L. Radzvilavicius, Postdoctoral Researcher of Evolutionary Biology, University of Pennsylvania.

This article is republished from The Conversation under a Creative Commons license.