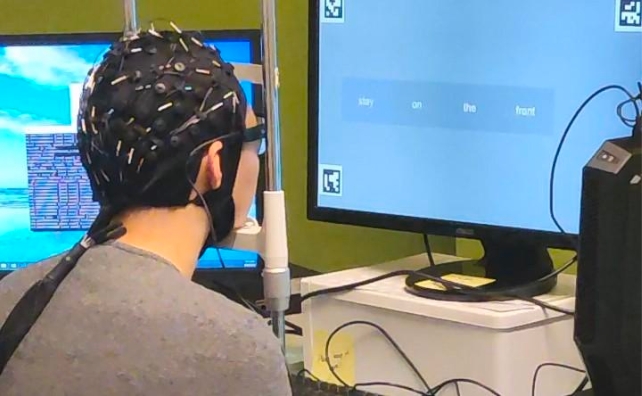

A world-first, non-invasive AI system can turn silent thoughts into text while only requiring users to wear a snug-fitting cap.

The Australian researchers who developed the technology, called DeWave, tested the process using data from more than two dozen subjects.

Participants read silently while wearing a cap that recorded their brain waves via electroencephalogram (EEG); DeWave then decoded those recordings into text.

With further refinement, DeWave could help stroke and paralysis patients communicate and make it easier for people to direct machines like bionic arms or robots.

"This research represents a pioneering effort in translating raw EEG waves directly into language, marking a significant breakthrough in the field," says computer scientist Chin-Teng Lin from the University of Technology Sydney (UTS).

Although DeWave achieved only a little over 40 percent accuracy on one of the two sets of metrics used in experiments by Lin and colleagues, this is a 3 percent improvement on the prior standard for thought translation from EEG recordings.

The researchers aim to improve accuracy to around 90 percent, which would be on par with conventional language translation or speech recognition software.

Other methods of translating brain signals into language require invasive surgeries to implant electrodes or bulky, expensive MRI machines, making them impractical for daily use – and they often need to use eye-tracking to convert brain signals into word-level chunks.

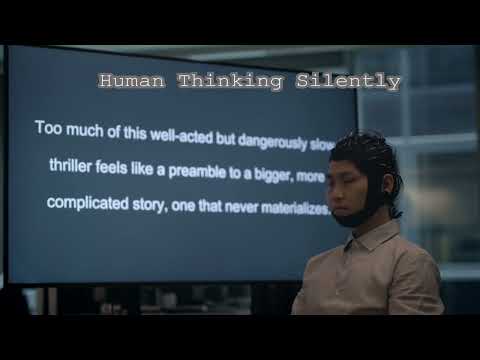

When a person's eyes dart from one word to another, it's reasonable to assume that their brain takes a short break between processing each word. Raw EEG wave translation into words – without eye tracking to indicate the corresponding word target – is harder.

Brain waves from different people don't all represent breaks between words quite the same way, making it a challenge to teach AI how to interpret individual thoughts.

After extensive training, DeWave's encoder turns EEG waves into a code that can then be matched to specific words based on how close they are to entries in DeWave's 'codebook'.
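The codebook matching described above resembles nearest-neighbour lookup, as used in vector quantization. Here is a minimal sketch, assuming Euclidean distance and a toy three-entry codebook. The vectors, the word labels and the function names `nearest_code` and `quantize` are hypothetical illustrations, not taken from DeWave itself.

```python
import math

# Hypothetical toy codebook: each entry pairs a label with a small vector.
# Real codebooks would be learned during training and be far larger.
CODEBOOK = {
    "man": [0.9, 0.1, 0.0],
    "reads": [0.1, 0.8, 0.2],
    "book": [0.0, 0.2, 0.9],
}


def nearest_code(embedding, codebook=CODEBOOK):
    """Return the label whose codebook vector is closest to `embedding`."""
    return min(codebook, key=lambda label: math.dist(embedding, codebook[label]))


def quantize(sequence, codebook=CODEBOOK):
    """Map each encoder output vector to its nearest codebook label."""
    return [nearest_code(vec, codebook) for vec in sequence]


# Stand-in for encoder outputs: one vector per segment of EEG signal.
encoded = [[0.8, 0.2, 0.1], [0.2, 0.7, 0.1], [0.1, 0.1, 0.8]]
print(quantize(encoded))
```

Because each noisy vector snaps to its closest entry, small variations in the signal can still resolve to the same discrete code.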

"It is the first to incorporate discrete encoding techniques in the brain-to-text translation process, introducing an innovative approach to neural decoding," explains Lin.

"The integration with large language models is also opening new frontiers in neuroscience and AI."

Lin and his team used trained language models that combined a system called BERT with GPT, and tested them on existing datasets of people whose eye movements and brain activity had been recorded while they read text.

This helped the system learn to match brain wave patterns with words. DeWave was then trained further with an open-source large language model that essentially makes sentences out of the words.

Translating verbs is where DeWave performed best. Nouns, on the other hand, tended to be translated as words with a similar meaning rather than as exact matches, like "the man" instead of "the author."

"We think this is because when the brain processes these words, semantically similar words might produce similar brain wave patterns," says first author Yiqun Duan, a computer scientist from UTS.

"Despite the challenges, our model yields meaningful results, aligning keywords and forming similar sentence structures."

The relatively large sample size helps account for the fact that people's EEG wave distributions vary greatly, suggesting that the research is more reliable than earlier technologies that have only been tested on very small samples.

There's more work to be done, and EEG signals are rather noisy when received through a cap rather than through electrodes implanted in the brain.

"The translation of thoughts directly from the brain is a valuable yet challenging endeavor that warrants significant continued efforts," the team writes.

"Given the rapid advancement of Large Language Models, similar encoding methods that bridge brain activity with natural language deserve increased attention."

The research was presented at the NeurIPS 2023 conference, and a preprint is available on arXiv.