Table tennis is one of the most skill-intensive sports on the planet – and engineers have now built a robot capable of beating highly skilled human players.

Its name is Ace, and against 'elite' amateur players who practice the sport for an average of 20 hours a week, the Sony AI robot won three out of five matches. It marks one of the strongest real-world demonstrations so far of a robot reaching high-level play in a fast, interactive sport.

This represents a pretty major robotics breakthrough: It's a system that combines high-speed sensing, AI decision-making, and robotic control to compete with human players in real-world conditions – making hair-trigger reactions in real time.

"This research has shown that an autonomous robot can, in fact, win at a competitive sport, matching or exceeding the reaction time and decision making of humans in a physical space," says roboticist Peter Dürr, director of Sony AI in Zürich, and project lead for Ace.

"Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power. This research breakthrough highlights the potential of physical AI agents to perform real-time interactive tasks, and represents a significant step toward creating robots with broader applications in fast, precise, and real-time human interactions."

Many AI systems have shown that machine learning can succeed at virtual challenges, from a simple game of Pong – Atari's famous video game with two paddles – to more complex games of strategy such as chess, Go, and StarCraft II.

Physical games in meatspace are far harder to conquer using artificial systems. A robot must perceive unpredictable changes in the external environment, interpret what those changes mean, decide how to react, and then perform the necessary action – all in the blink of an eye.

Ace builds on previous work by the team at Sony AI, an agent named Gran Turismo Sophy that can outrace human players in the video game Gran Turismo. However, Ace is obviously far more complex.

Its design consists of three main parts. First is its perception system that allows it to see and track the ball. Crucially, this includes the ability to detect the ball's spin, which can change how the ball bounces and its trajectory through the air. Previous table tennis robots often struggled to account for spin, despite its importance in real play.

The second major component is an AI 'brain' trained by deep reinforcement learning, taking shot after shot in simulated, virtual games to learn by trial and error what works and what doesn't. This means that the system can make decisions in the moment, rather than relying on pre-programmed presets.

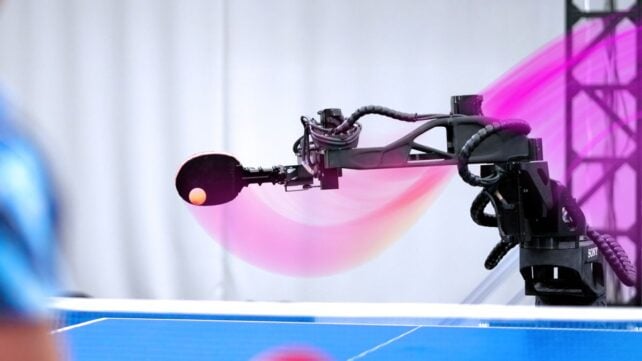

The third component is high-speed robotic hardware: an eight-jointed, highly agile robotic arm that can place the bat where and how the AI decides, with precision and speed.
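
To give a feel for what learning "by trial and error" in simulation means, here is a deliberately tiny Python sketch. It is not Ace's method: Ace uses deep reinforcement learning, while this toy uses a much simpler epsilon-greedy strategy. The shot names, the success rates, and the helper functions are all invented for illustration.

```python
import random

# Hypothetical shot types and their hidden success rates in a toy simulator.
# These numbers are invented for illustration; they are not from the paper.
HIDDEN_SUCCESS_RATE = {"push": 0.35, "loop": 0.80, "smash": 0.55}


def simulate_shot(shot, rng):
    """Return 1.0 if the simulated shot lands, else 0.0."""
    return 1.0 if rng.random() < HIDDEN_SUCCESS_RATE[shot] else 0.0


def learn_by_trial_and_error(n_trials=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy learning: mostly use the best-known shot, sometimes explore."""
    rng = random.Random(seed)
    shots = list(HIDDEN_SUCCESS_RATE)
    estimate = {s: 0.0 for s in shots}  # running average reward per shot
    count = {s: 0 for s in shots}       # how often each shot was tried
    for _ in range(n_trials):
        if rng.random() < epsilon:
            shot = rng.choice(shots)              # explore a random shot
        else:
            shot = max(shots, key=estimate.get)   # exploit the current best guess
        reward = simulate_shot(shot, rng)
        count[shot] += 1
        # Incremental update of the running average for this shot.
        estimate[shot] += (reward - estimate[shot]) / count[shot]
    return estimate


estimates = learn_by_trial_and_error()
best_shot = max(estimates, key=estimates.get)
print(best_shot, {s: round(v, 2) for s, v in estimates.items()})
```

The learner is never told which shot works best. It only sees whether each attempt succeeded, and its estimates drift toward the hidden success rates as the simulated rallies pile up.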

To put Ace through its paces, Sony AI pitted the robot against seven human players – best-of-three matches against five elite amateurs who have been playing for more than a decade and practice heavily and consistently, and best-of-five matches against two professional Japanese league players, Minami Ando and Kakeru Sone.

It played a total of 13 games against the elite players and won seven times, for a total of three match wins. Against the professionals, its techniques were not quite as effective: Ace won just one game out of seven played, and ultimately lost both matches.
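
For readers who want the tallies as percentages, the short Python snippet below works them out from the game counts reported above. Only the variable and function names are made up here.

```python
# Game tallies as reported in the article.
results = {
    "elite amateurs": {"games_played": 13, "games_won": 7},
    "professionals": {"games_played": 7, "games_won": 1},
}


def win_rate(record):
    """Fraction of games Ace won against one group of opponents."""
    return record["games_won"] / record["games_played"]


for group, record in results.items():
    print(f"{group}: {record['games_won']}/{record['games_played']} "
          f"games = {win_rate(record):.0%}")
```

That works out to roughly 54 percent of games won against the elite amateurs and roughly 14 percent against the professionals.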

But its performance exceeds that of previous table tennis robots, reaching a level that can compete with high-level human players.

Analysis of Ace's games showed that the spin detection may be key. The robot scored points not by hitting harder than its human opponents but because it had masterful control, successfully returning 75 percent of spinning balls across a wide range of spins.
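
Why does spin matter so much to whoever is returning the ball? The rough Python sketch below flies the same shot with topspin, no spin, and backspin, then compares where each lands. It is a simplified physics toy, not Sony AI's model: the Magnus coefficient is an illustrative made-up constant, air drag is ignored, and the launch speeds and height are arbitrary.

```python
G = 9.81              # gravitational acceleration, m/s^2
MAGNUS_COEFF = 0.005  # illustrative lumped constant, NOT a measured value


def landing_distance(spin_rad_s, vx=6.0, vy=1.0, height=0.3, dt=1e-4):
    """Integrate a 2D ball flight and return the horizontal distance at landing.

    spin_rad_s < 0 is topspin and > 0 is backspin for a ball travelling in +x.
    Air drag is ignored to keep the sketch short.
    """
    x, y = 0.0, height
    while y > 0.0:
        # Magnus acceleration is proportional to (spin vector) x (velocity).
        ax = -MAGNUS_COEFF * spin_rad_s * vy
        ay = MAGNUS_COEFF * spin_rad_s * vx - G
        vx += ax * dt
        vy += ay * dt
        x += vx * dt
        y += vy * dt
    return x


topspin = landing_distance(-150.0)
no_spin = landing_distance(0.0)
backspin = landing_distance(150.0)
print(f"topspin: {topspin:.2f} m, no spin: {no_spin:.2f} m, backspin: {backspin:.2f} m")
```

In this toy model, the topspin ball dips and lands short while the backspin ball floats long, even though all three leave the bat at the same speed and angle. A robot that cannot read spin would misjudge where to put its bat.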

It also won multiple direct points on serves and performed several maneuvers that surprised human observers.

As the researchers note in their paper, table tennis expert and former Olympian Kinjiro Nakamura said, on seeing one of Ace's shots: "No one else would have been able to do that. I didn't think it was possible. But the fact that it was possible … means that there is a possibility that a human could do it too."

This suggests that robots like Ace could help human players discover new techniques and improve their own performance.

Ace has yet to reach, in table tennis, the level of dominance that AI systems such as AlphaGo and Deep Blue achieved in board games such as Go and chess. It does, however, significantly advance what we may be able to do with robotics in the future, the researchers say.

"This breakthrough is much bigger than table tennis," Sony AI chief scientist Peter Stone says.

"It represents a landmark moment in AI research, showing, for the first time, that an AI system can perceive, reason, and act effectively in complex, rapidly changing real-world environments that demand precision and speed. Once AI can operate at an expert human level under these conditions, it opens the door to an entirely new class of real-world applications that were previously out of reach."

Now, hands up if you want to see two Ace robots square up against each other.

The paper has been published in Nature.