Spiders rely significantly on touch to sense the world around them. Their bodies and legs are covered in tiny hairs and slits that can distinguish between different kinds of vibrations.

Any prey blundering into a web makes a very different vibrational clamor from another spider coming a-wooing, or the stirring of a breeze, for example. Each strand of a web produces a different tone.

A few years ago, scientists translated the three-dimensional structure of a spider's web into music, working with artist Tomás Saraceno to create an interactive musical instrument, titled Spider's Canvas.

In 2021, the team refined and built on that previous work, adding a virtual reality component that lets people enter the web and interact with it.

The team said this research will help them better understand the three-dimensional architecture of a spider's web, and may even help us learn the vibrational language of spiders.

"The spider lives in an environment of vibrating strings," said engineer Markus Buehler of MIT. "They don't see very well, so they sense their world through vibrations, which have different frequencies."

When you think of a spider's web, you most likely think of the web of an orb weaver: flat, round, with radial spokes around which the spider constructs a spiral net. Most spiderwebs, however, are not of this kind; they are built in three dimensions, like sheet webs, tangle webs, and funnel webs.

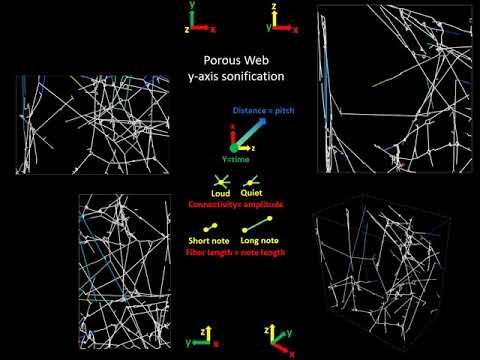

To explore the structure of these kinds of webs, the team housed a tropical tent-web spider (Cyrtophora citricola) in a rectangular enclosure and waited for it to fill the space with a three-dimensional web. Then they used a sheet laser to illuminate 2D cross-sections of the web and capture high-definition images of each one.

A specially developed algorithm then pieced together the 3D architecture of the web from these 2D cross-sections. To turn this into music, different sound frequencies were allocated to different strands, and the resulting notes were played in patterns based on the web's structure.
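The team's reconstruction algorithm is not described here, but the basic idea of rebuilding a 3D shape from stacked slices can be pictured with a minimal Python sketch. It assumes each laser cross-section has already been reduced to a binary image (1 where silk is lit, 0 elsewhere) and that the slices are a fixed, known distance apart. The function name `stack_slices` and its parameters are hypothetical.

```python
from typing import List, Tuple

Point3D = Tuple[float, float, float]


def stack_slices(
    slices: List[List[List[int]]], pixel_size: float, slice_spacing: float
) -> List[Point3D]:
    """Turn a stack of binary 2D cross-sections into a 3D point cloud.

    Hypothetical input format: slices[k][row][col] == 1 where the sheet
    laser lit up silk in the k-th cross-section.
    """
    points: List[Point3D] = []
    for k, image in enumerate(slices):
        z = k * slice_spacing  # each slice sits one step further along the scan axis
        for row, line in enumerate(image):
            for col, value in enumerate(line):
                if value:  # keep only pixels that contain silk
                    points.append((col * pixel_size, row * pixel_size, z))
    return points


# Two tiny 2x2 slices, each with a single lit pixel
cloud = stack_slices([[[0, 1], [0, 0]], [[1, 0], [0, 0]]], pixel_size=0.5, slice_spacing=2.0)
print(cloud)
```

Grouping these points into individual strands, and then giving each strand its own frequency, would be further steps that this sketch does not attempt.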

They also scanned a web while it was being spun, translating each step of the process into music. The notes change as the structure of the web changes, so the listener can hear the web being built. Having a step-by-step record of the process could also help us understand how spiders build a 3D web without support structures, an ability that could be useful for 3D printing.

Spider's Canvas allowed audiences to hear the spider music, but the virtual reality environment, in which users can enter the web and play its strands themselves, adds a whole new layer of experience, the researchers said.

"The virtual reality environment is really intriguing because your ears are going to pick up structural features that you might see but not immediately recognize," Buehler explained.

"By hearing it and seeing it at the same time, you can really start to understand the environment the spider lives in."

This VR environment, with realistic web physics, also lets researchers see what happens when they tamper with parts of the web. Stretch a strand, and its tone changes. Break one, and see how that affects the strands around it. This, too, can help us understand the architecture of a spider's web, and why webs are built the way they are.
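Why stretching a strand changes its tone can be illustrated with the textbook formula for an ideal vibrating string fixed at both ends, f = (1 / 2L) · √(T / μ), where L is the length, T the tension and μ the mass per unit length. Whether the team's VR physics uses this exact model is an assumption; real silk is far from an ideal string, and the function name and values below are hypothetical.

```python
import math


def string_frequency(length_m: float, tension_n: float, linear_density_kg_per_m: float) -> float:
    """Fundamental frequency (Hz) of an ideal string fixed at both ends.

    Uses f = (1 / 2L) * sqrt(T / mu). Illustration only: it ignores the
    elasticity and damping of real spider silk.
    """
    if length_m <= 0 or tension_n <= 0 or linear_density_kg_per_m <= 0:
        raise ValueError("length, tension and linear density must be positive")
    wave_speed = math.sqrt(tension_n / linear_density_kg_per_m)  # speed of waves along the string
    return wave_speed / (2.0 * length_m)


# Hypothetical strand: same length and density, but four times the tension
relaxed = string_frequency(0.05, 1e-3, 1e-8)
stretched = string_frequency(0.05, 4e-3, 1e-8)
print(stretched / relaxed)
```

In this model, quadrupling the tension at a fixed length doubles the frequency, raising the note by an octave. A real stretch also lengthens the strand and thins it, which pulls the pitch in different directions, so the formula only captures the tension part of the story.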

And, perhaps most fascinatingly, the work enabled the team to develop an algorithm that identifies the types of vibrations in a spider's web, classifying them as "trapped prey", "web under construction", or "another spider has arrived with amorous intent". This, the team said, lays the groundwork for learning to speak spider, or at least tropical tent-web spider.
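The article does not say how the team's classifier works. As a stand-in, here is a minimal Python sketch of one simple technique: a nearest-centroid classifier that labels a vibration by its dominant frequency, found with a naive discrete Fourier transform. The function names, the labels and the synthetic test tones are all hypothetical, not measured spider data, and real web vibrations would need far richer features than a single frequency.

```python
import cmath
import math
from typing import Dict, List, Sequence


def dominant_frequency(signal: Sequence[float], sample_rate: float) -> float:
    """Frequency (Hz) of the strongest non-DC bin of a naive DFT."""
    n = len(signal)
    best_bin, best_magnitude = 0, -1.0
    for k in range(1, n // 2 + 1):  # skip the DC bin, stop at the Nyquist bin
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(signal))
        if abs(coeff) > best_magnitude:
            best_bin, best_magnitude = k, abs(coeff)
    return best_bin * sample_rate / n


def train_centroids(examples: Dict[str, List[Sequence[float]]], sample_rate: float) -> Dict[str, float]:
    """Mean dominant frequency for each labelled group of example signals."""
    return {
        label: sum(dominant_frequency(s, sample_rate) for s in signals) / len(signals)
        for label, signals in examples.items()
    }


def classify(signal: Sequence[float], centroids: Dict[str, float], sample_rate: float) -> str:
    """Return the label whose centroid lies closest to the signal's dominant frequency."""
    f = dominant_frequency(signal, sample_rate)
    return min(centroids, key=lambda label: abs(centroids[label] - f))


def tone(freq_hz: float, sample_rate: float, n_samples: int = 200) -> List[float]:
    """Synthetic sine wave, used only to exercise the sketch."""
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n_samples)]


SAMPLE_RATE = 1000.0
# Hypothetical frequencies chosen only for illustration, not real spider signals
model = train_centroids(
    {
        "trapped prey": [tone(20, SAMPLE_RATE)],
        "web under construction": [tone(150, SAMPLE_RATE)],
        "courting spider": [tone(400, SAMPLE_RATE)],
    },
    SAMPLE_RATE,
)
print(classify(tone(25, SAMPLE_RATE), model, SAMPLE_RATE))  # prints "trapped prey"
```

A nearest-centroid rule is about the simplest classifier that learns from labelled examples; a practical system would more likely combine several spectral and timing features.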

"Now we're trying to generate synthetic signals to basically speak the language of the spider," Buehler said.

"If we expose them to certain patterns of rhythms or vibrations, can we affect what they do, and can we begin to communicate with them? Those are really exciting ideas."

The team presented their work at the 2021 spring meeting of the American Chemical Society. Their previous research was published in 2018 in the Journal of the Royal Society Interface.

A version of this article was first published in April 2021.