Scientists have predicted that, unless radical improvements are made in the way we design computers, by 2040 computer chips will need more electricity than global energy production can deliver.

The projection could mean that our ability to keep pace with Moore's Law – the idea that the number of transistors in an integrated circuit doubles approximately every two years – is about to slide out of our grasp.
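To put the doubling rule in numbers: if a chip starts with N0 transistors and the count doubles every two years, then after t years it holds N0 × 2^(t/2). The short Python sketch below is purely illustrative. The function name and the one-billion-transistor starting point are hypothetical, not figures from the SIA report.

```python
def transistor_count(n0: int, years: float, doubling_period: float = 2.0) -> float:
    """Idealised Moore's Law projection: n0 doubles every doubling_period years.

    This is a hypothetical helper for illustration, not an industry model.
    """
    return n0 * 2 ** (years / doubling_period)


# Hypothetical chip with one billion transistors, projected 10 years ahead:
# five doublings give a 32-fold increase.
print(transistor_count(1_000_000_000, 10))  # prints 32000000000.0
```

Twenty years of doubling works out to 2^10, roughly a thousand-fold increase. If the energy used per transistor stayed flat, total power draw would grow by the same factor, which is why efficiency gains have to keep pace with transistor counts.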

The prediction that computer chips' electricity demand will outpace global supply was originally contained in a report released late last year by the Semiconductor Industry Association (SIA), but it has hit the spotlight now because the group has issued its final roadmap assessment on the outlook for the semiconductor industry.

The basic idea is that as computer chips become ever more powerful thanks to their greater transistor counts, they'll need to draw more power in order to function (unless efficiency improves).

Semiconductor manufacturers can counter this power draw with clever engineering, but the SIA says there's a limit to how far current methods can take this.

"Industry's ability to follow Moore's Law has led to smaller transistors but greater power density and associated thermal management issues," the 2015 report explains.

"More transistors per chip mean more interconnects – leading-edge microprocessors can have several kilometres of total interconnect length. But as interconnects shrink they become more inefficient."

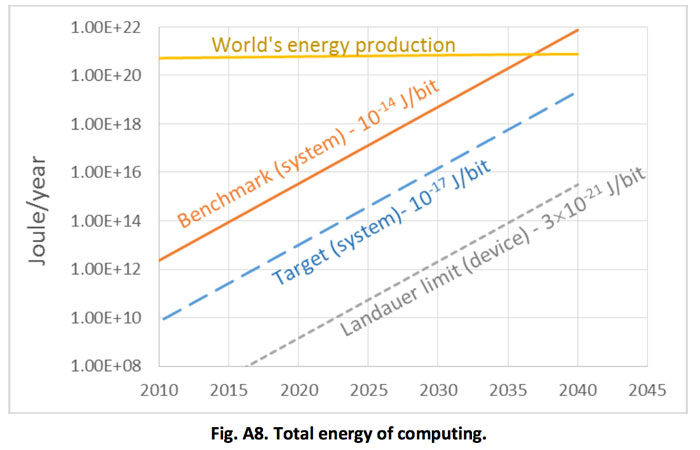

In the long run, the SIA calculates that, at the rate things are going using today's approaches to chip engineering, "computing will not be sustainable by 2040, when the energy required for computing will exceed the estimated world's energy production".

You can see the problem in the SIA's graph, with the power draw of today's mainstream systems (the benchmark line, represented in orange) eclipsing the world's projected energy production sometime between 2035 and 2040.

These days, chip engineers stack ever-smaller transistors in three dimensions in order to improve performance and keep pace with Moore's Law, but the SIA says that approach won't work forever, given how much energy will be lost in future chips as they grow progressively denser.

Image: SIA

"Conventional approaches are running into physical limits. Reducing the 'energy cost' of managing data on-chip requires coordinated research in new materials, devices, and architectures," the SIA states.

"This new technology and architecture needs to be several orders of magnitude more energy efficient than best current estimates for mainstream digital semiconductor technology if energy consumption is to be prevented from following an explosive growth curve."

The challenge, then, is well and truly on for today's computer engineers and scientists, with the SIA's new roadmap report also advising that, beyond 2020, it will become economically unviable to improve chip performance by traditional scaling methods, such as shrinking transistors.

It's a huge ask, but the next leaps in computing efficiency might need to come from areas not strictly related to transistor counts, and hopefully the spirit, if not the specifics, of Moore's Law will continue in the coming decades.

"That wall really started to crumble in 2005, and since that time we've been getting more transistors but they're really not all that much better," computer engineer Thomas Conte from Georgia Tech told Rachel Courtland at IEEE Spectrum.

"This isn't saying this is the end of Moore's Law. It's stepping back and saying what really matters here – and what really matters here is computing."