Neuroscientists have found evidence that our brains could have 10 times the memory capacity previously thought, bringing the total amount - in computer terms - to at least 1 petabyte (1 million GB) of storage space.

That's equivalent to the memory capacity of roughly 31,250 iPhone 7s (the 32 GB kind) - all in the human brain.
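For readers who want to check that comparison, here is a minimal sketch of the arithmetic. It assumes the decimal units the article uses (1 petabyte = 1 million GB); the function and constant names are illustrative only.

```python
# Decimal units, as used in the article: 1 PB = 1,000,000 GB.
GB_PER_PETABYTE = 1_000_000
IPHONE_7_GB = 32  # the 32 GB model mentioned above


def phones_per_petabyte(phone_gb: int = IPHONE_7_GB) -> float:
    """Return how many phones of the given size add up to one petabyte."""
    return GB_PER_PETABYTE / phone_gb


print(phones_per_petabyte())  # 31250.0
```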

"This is a real bombshell in the field of neuroscience," says one of the researchers, Terry Sejnowski from the Salk Institute for Biological Studies.

"Our new measurements of the brain's memory capacity increase conservative estimates by a factor of 10 to at least a petabyte - in the same ballpark as the World Wide Web."

To be clear, although scientists often describe the brain's capacity in computer terms, our brains are far more complex and flexible than a hard drive, and function in a very different manner.

Our brains encode memories as electrical pulses, firing neurons in different areas of the brain to create a complex web of pulses that encodes our thoughts and experiences.

This means memory is distributed all over the brain - unlike a computer, which just has one specific location for each file.

As Robert Epstein points out at Aeon, the human brain doesn't store words or the rules that tell it how to manipulate them, and it doesn't create representations of visual stimuli, store them in a short-term memory buffer, and then transfer them into a long-term memory device.

"Computers do all of these things, but organisms do not," he says.

But the metaphor of computer storage is a useful way to put some of the power of our brains' capacity into perspective - and it turns out that capacity is bigger than previously estimated, because there's even more diversity in our synapses than we thought.

Sejnowski and his team reconstructed the hippocampus - an area of the brain commonly associated with long-term memory - of a rat using 3D computer modelling to investigate its memory function.

"When we first reconstructed every dendrite, axon, glial process, and synapse from a volume of hippocampus [that was] the size of a single red blood cell, we were somewhat bewildered by the complexity and diversity amongst the synapses," says one of the team, Kristen Harris from the University of Texas, Austin.

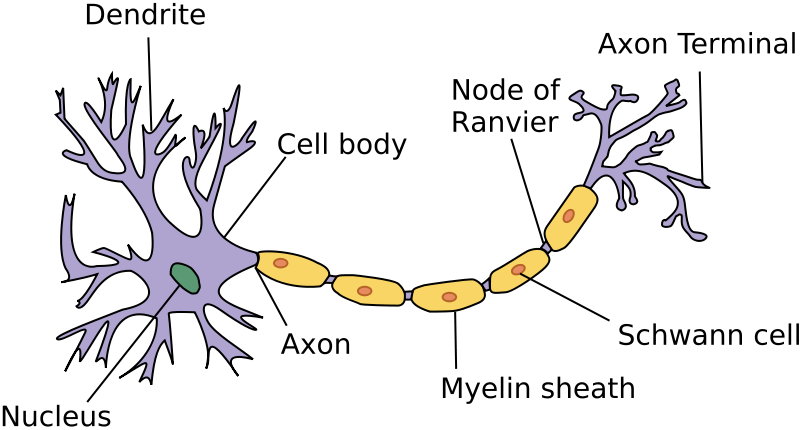

While doing this, the team saw that in some cases a single axon formed two synapses onto the same dendrite - the spiky part of a nerve cell - meaning the neuron was likely sending duplicate messages.

Anatomy and Physiology/Wikimedia

While this type of connection is fairly common - occurring in roughly 10 percent of connections in the hippocampus of rats - it's not well understood. So the team delved further to see if synaptic size played a bigger role than previously thought.

Using newly developed algorithms and microscopy techniques, the team reconstructed these synapses at the nanomolecular level, letting them examine the synapses in more detail than ever before.

It turned out that the duplicate synapses were identical in just about every way.

"We were amazed to find that the difference in the sizes of the pairs of synapses were very small, on average, only about 8 percent different in size. No one thought it would be such a small difference. This was a curveball from nature," says team member Tom Bartol, from the Salk Institute.

This 8 percent difference is crucial - before now, there were only three listed sizes of synapse: small, medium, and large.

While these three sizes work well for ordering a cup of coffee or buying a t-shirt, synapse size is linked to how much memory capacity a single synapse has.

That means if synapses can come in more than three sizes - differing from each other by as little as 8 percent - the brain could store a lot more than was estimated using the older, less precise measurements.
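To see why more sizes means more storage, here is a rough sketch. It assumes, as a simplification, that each synapse holds one of N equally likely, distinguishable sizes, so its capacity is log2(N) bits. The larger size counts in the loop are hypothetical values chosen for illustration, not figures from the article.

```python
import math


def bits_per_synapse(num_sizes: int) -> float:
    """Bits one synapse could encode if it takes one of num_sizes
    equally likely, distinguishable sizes (a simplifying assumption)."""
    if num_sizes < 1:
        raise ValueError("num_sizes must be at least 1")
    return math.log2(num_sizes)


# Three sizes (small, medium, large) versus hypothetical finer gradations.
for n in (3, 8, 16, 32):
    print(f"{n} sizes -> {bits_per_synapse(n):.2f} bits per synapse")
```

Under this assumption, three sizes give roughly 1.6 bits per synapse, and each doubling of the number of distinguishable sizes adds one more bit.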

"This is roughly an order of magnitude of precision more than anyone has ever imagined," Sejnowski explains.

The team suggests that synapses change size to fit the signal they're being sent, allowing them to be more versatile.

"This means that every 2 or 20 minutes, your synapses are going up or down to the next size. The synapses are adjusting themselves according to the signals they receive," says Bartol.

It's important to note that the study was conducted only on rats, and until the research is repeated in humans, there's no way of knowing whether the results can be replicated.

But it's hoped that these new insights will spur a better understanding of crucial processes in the brain, and could be used by computer scientists to make more efficient systems based on brain memory.

"The implications of what we found are far-reaching," Sejnowski says. "Hidden under the apparent chaos and messiness of the brain is an underlying precision to the size and shapes of synapses that was hidden from us."

The team's work was published in eLife.