Forget using healing brushes or copying and patching scraps of images with Adobe Photoshop – a revolutionary new AI program might soon let you seamlessly reconstruct entire missing sections of an image in a way that needs to be seen to be believed.

The US tech company Nvidia has come up with a novel method for editing complex images that uses neural networking to fill in the blanks, resulting in a far more realistic picture in a fraction of the time required by current programs.

Erasing the ex from your family portrait currently requires some clever use of a handful of tools that use the surrounding pixels to estimate the colours and patterns that go inside their cut-out silhouette.

In addition to these 'valid' outside pixels, the tools also sample the stand-in pixels already copied into the cut-out, combining the two into a best-guess background pattern.

For the most part this works surprisingly well. But that double-dipping of pixels can also produce grainy artefacts and fuzzy smearing effects that require fixing in post-production.
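To see why that double-dipping causes trouble, here is a deliberately tiny Python sketch. It is a toy illustration and not how Photoshop's tools actually work: the function name `mean_filter_at` and the 3x3 grey image are made up for this example. It averages a pixel's neighbourhood while treating the erased pixel's placeholder value as if it were real data.

```python
def mean_filter_at(image, y, x):
    """Average the 3x3 neighbourhood around (y, x), holes included."""
    h, w = len(image), len(image[0])
    values = [image[yy][xx]
              for yy in range(max(0, y - 1), min(h, y + 2))
              for xx in range(max(0, x - 1), min(w, x + 2))]
    return sum(values) / len(values)

# A flat grey background (brightness 90) with one erased pixel stored as 0.
image = [[90, 90, 90],
         [90,  0, 90],
         [90, 90, 90]]

# The placeholder 0 is averaged in with the eight real pixels,
# so the estimate comes out darker than the true background.
estimate = mean_filter_at(image, 1, 1)
print(estimate)  # 80.0 instead of the expected 90
```

The filled-in pixel lands at 80 rather than 90 because the empty placeholder was counted as a genuine sample, which is the kind of smearing that then needs touching up.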

Seasoned digital artists can make light work of this by touching up the image, but it still takes time that is better spent elsewhere.

Nvidia went back to the drawing board on how to efficiently pick the right pixels to fill in a hole of missing detail in order to integrate with the surrounding pattern. Check out the clip below to get an idea of what it looks like.

This new inpainting process not only uses 'valid' pixels to determine what details go where, but also uses the deep learning power of a trained neural network to make a much better guess on how they should be arranged.

This is a little like teaching an art student how to paint based on their experiences of art galleries.

Little by little, through learning the rules, they pick up the tricks of the trade until their clumsy blotches turn into masterpieces.

As clever as this process is, even the best artificial intelligence can fail to grasp all of the details. Just look at the nightmares Google's Deep Dream network gives birth to! Or don't.

More recently, an artist named Robbie Barrat used a similar classically trained AI process to generate nudes that, well, let's just say aren't exactly NSFW.

To improve on this, Nvidia applied a novel mathematical approach to change the way AI compares pixels inside the cut-out zone with those outside, making for a more normal, blended look.

The exact details of this process for the tech-minded can be found on the pre-peer-review website arxiv.org.
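For a rough feel of the idea, the arXiv paper calls the technique a 'partial convolution': each filter looks only at valid pixels, rescales its result by how many valid pixels it actually saw, and then marks the output position as valid so the hole shrinks with every layer. The Python sketch below is a minimal, single-layer illustration under our own assumptions, not Nvidia's implementation: the function name `partial_conv`, the greyscale list-of-lists image, the averaging kernel, and the choice to treat pixels beyond the image border as invalid are all ours.

```python
def partial_conv(image, mask, kernel, bias=0.0):
    """One partial-convolution step on a 2D greyscale image.

    image, mask: lists of rows; mask is 1 for valid pixels, 0 for holes.
    kernel: square list of rows with an odd side length.
    Returns (output, new_mask).
    """
    h, w = len(image), len(image[0])
    k = len(kernel)
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    new_mask = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc, valid = 0.0, 0
            for dy in range(-r, r + 1):
                for dx in range(-r, r + 1):
                    yy, xx = y + dy, x + dx
                    # Assumption: pixels beyond the border count as invalid.
                    if 0 <= yy < h and 0 <= xx < w and mask[yy][xx]:
                        acc += kernel[dy + r][dx + r] * image[yy][xx]
                        valid += 1
            if valid:
                # Rescale by (window size / valid count) so missing
                # pixels do not drag the result towards zero.
                out[y][x] = acc * (k * k) / valid + bias
                new_mask[y][x] = 1  # this position is now filled
    return out, new_mask

# Flat background of 5.0 with one hole in the middle (placeholder 0.0).
image = [[5.0, 5.0, 5.0], [5.0, 0.0, 5.0], [5.0, 5.0, 5.0]]
mask = [[1, 1, 1], [1, 0, 1], [1, 1, 1]]
kernel = [[1 / 9] * 3 for _ in range(3)]  # simple averaging filter

out, new_mask = partial_conv(image, mask, kernel)
```

Because the placeholder in the hole is never sampled, the centre pixel comes out at roughly 5.0, matching its surroundings, and the updated mask reports the hole as filled. In a real network the kernel weights are learned and many such layers are stacked.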

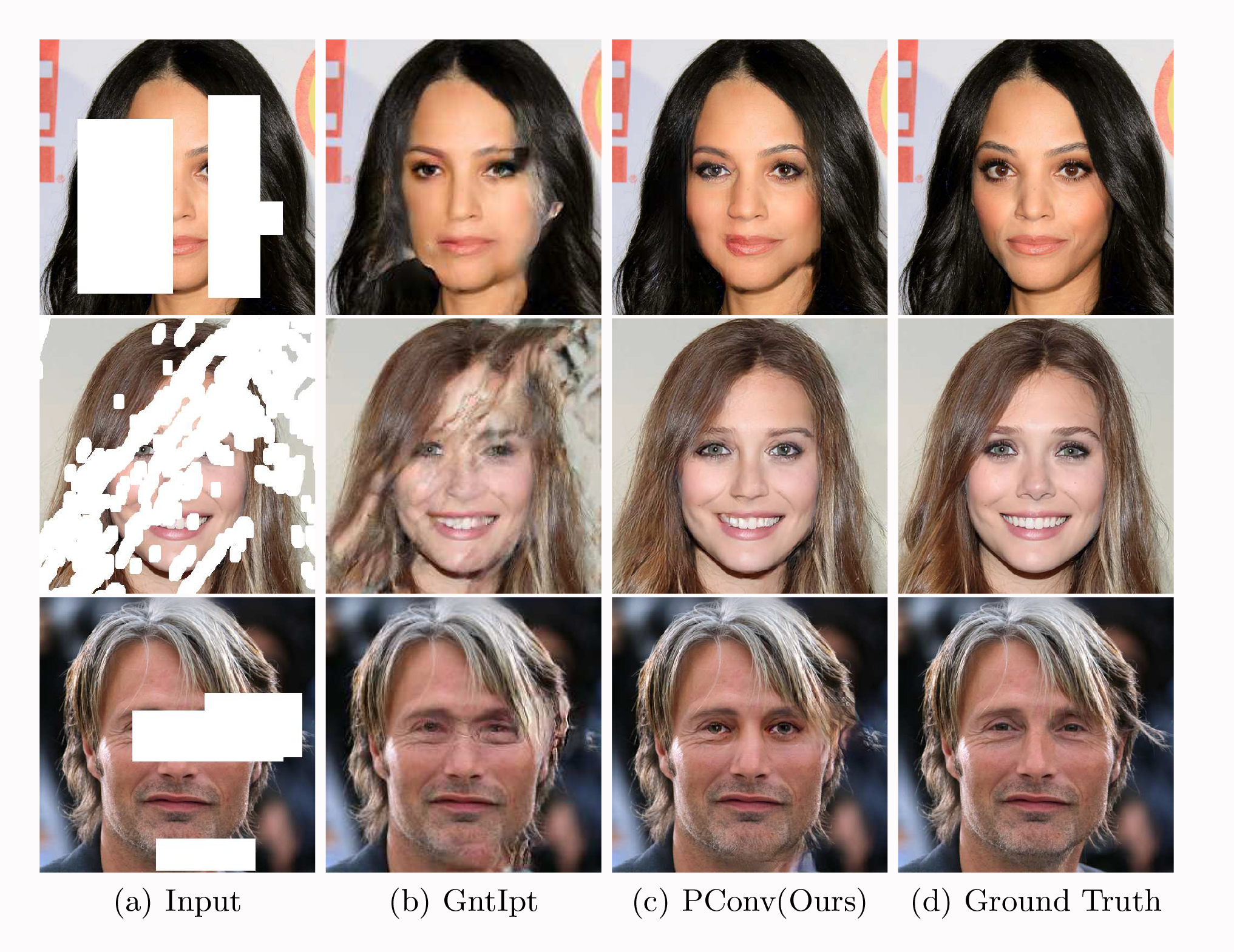

Have a look at the examples below that compare existing approaches (b) with Nvidia's AI (c) and untouched originals (d).

(NVIDIA Corporation)

Sure, it's still not always perfect, but it's a huge leap forward in editing technology.

This will be a big win for artists, but for those of us trying to work out whether a picture is authentic or a fabrication it's one more headache.

Last year, Nvidia developed a process that could use what's known as a generative adversarial network (GAN) to whip up photo-realistic images of fake people.

Then they used the power of GANs to compare two landscapes and add key elements that exist in one but not the other, changing things like the weather or time of day.

In an age where we can put incriminating words into the mouths of past presidents, and potentially even synthesise a realistic voice to match, we just have to accept once and for all that nothing we see should be taken at face value.

Except for that space in your family portrait. Ex? What ex?